CBS New York: AI voice‑cloning scams can clone a loved one in minutes to extort payments

CBS New York demonstrates how as little as 20–30 seconds of audio can produce a convincing voice clone that scammers use to impersonate family members or officials. Reporters outline practical steps to verify a caller's identity and avoid being tricked by a cloned voice.

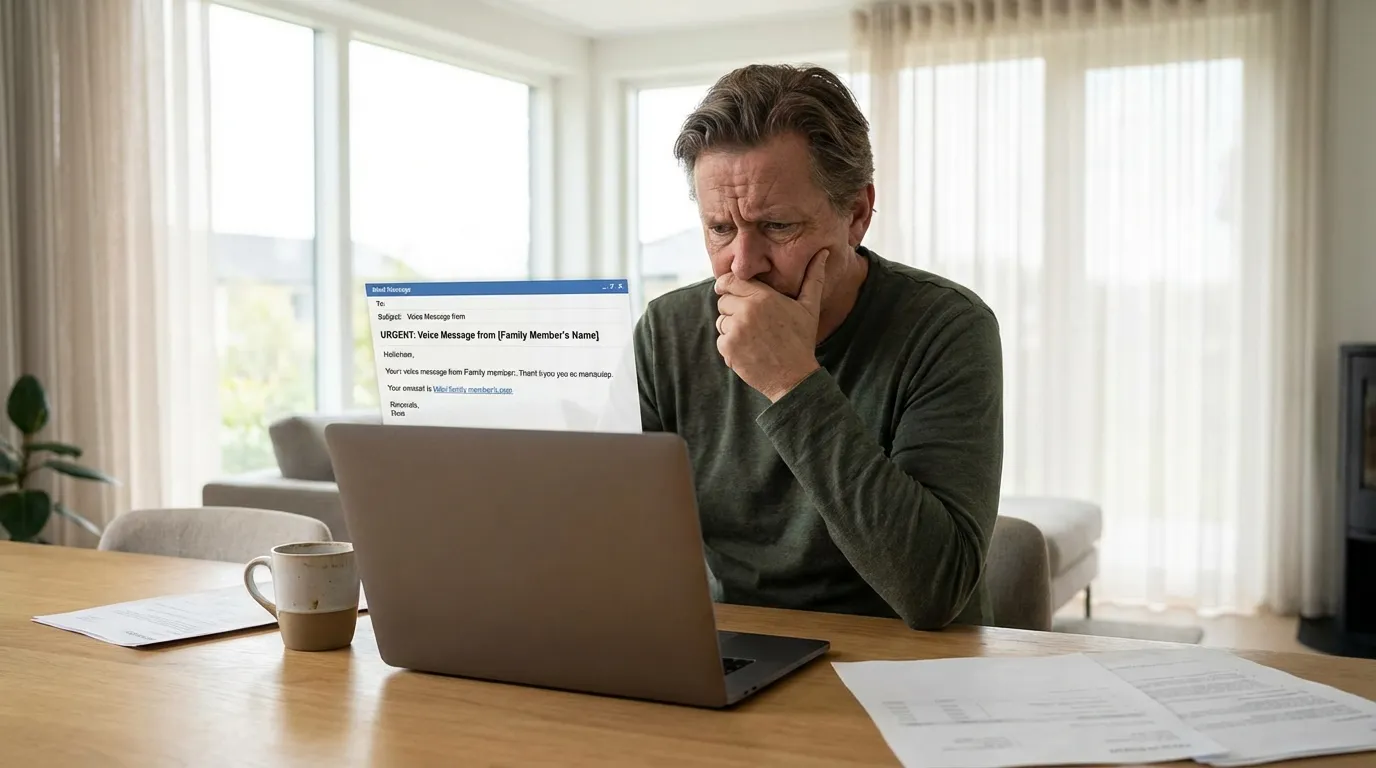

A CBS New York investigation shows that readily available AI tools can clone a voice from as little as 20–30 seconds of audio, enabling scammers to impersonate relatives, friends or authority figures to extract money or sensitive information. The report includes demonstrations in which cloned audio was used to create believable emergency pleas and fake directives, illustrating the emotional leverage scammers exploit to bypass rational scrutiny. Experts interviewed advise several verification steps: call back on a known number, ask questions only the real person could answer, request a live video call, and never send money or transfer cryptocurrency on demand. Law enforcement warns that voice cloning will increasingly be paired with social engineering, deepfake video and phishing to create multi‑modal cons that are harder to detect. Consumers are advised to be skeptical of urgent audio requests, enable two‑factor authentication on their accounts, and report impersonation attempts to local police and to the platforms where the scam originated. The story highlights the urgency for platforms, regulators and consumers to adopt authentication measures and support public awareness campaigns.
