Voice‑Cloning Scams Remain a Growing Threat, Experts Warn

No major new voice‑deepfake incident was reported in the past 48 hours, but advisories note a general uptick in voice‑cloning fraud. Consumers and businesses are urged to verify requests through out‑of‑band methods, such as calling back on a known number.

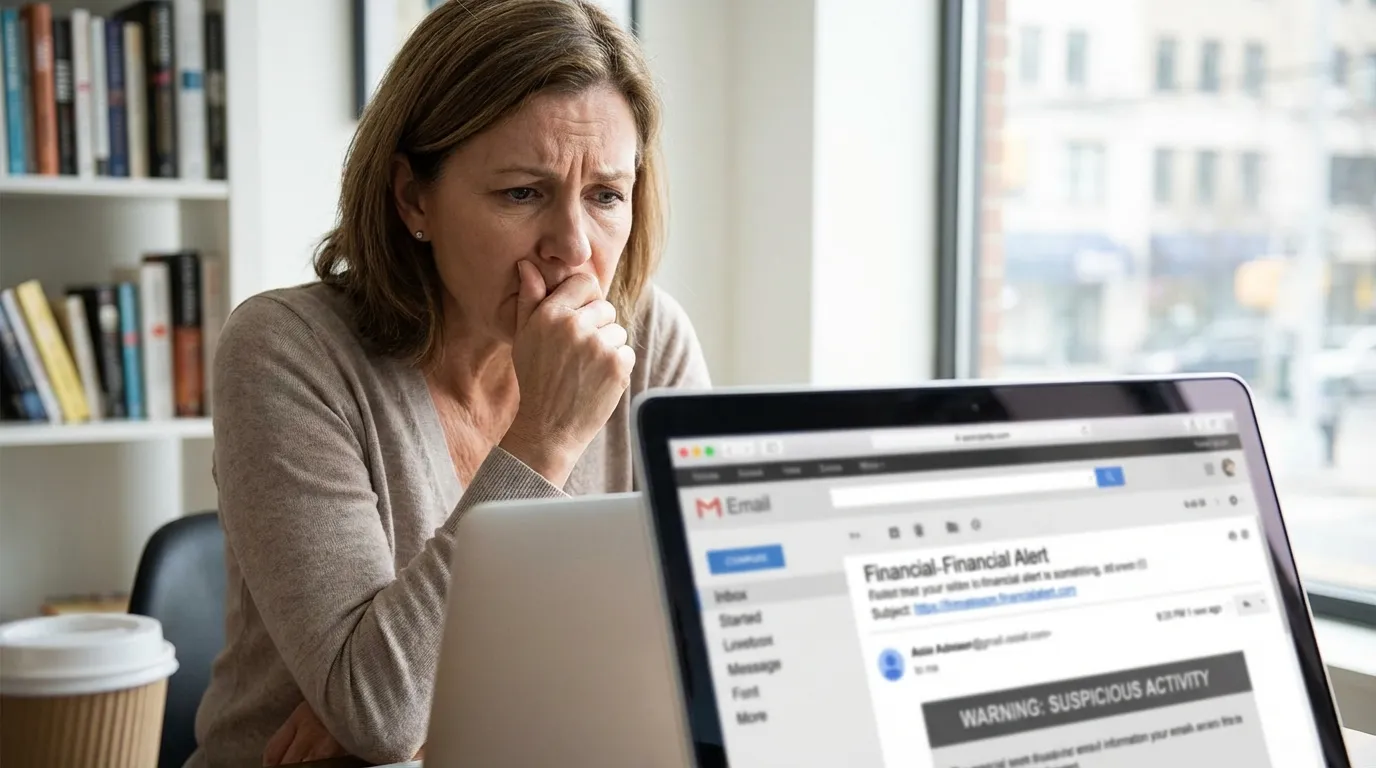

Recent advisories and previously documented cases continue to show voice‑cloning scams as an escalating fraud vector, even though no single large, new incident was reported in the last 48 hours. Scammers increasingly use AI to synthesize realistic replicas of a person's voice, impersonating executives, family members, or service agents in order to authorize payments, extract credentials, or pressure victims into transferring funds. Because a synthesized voice can be combined with social‑engineering scripts and real contextual details, victims may be convinced to act without independent verification.

Experts advise organizations to implement strict verification protocols for financial authorizations, such as call‑back procedures, code words, and multi‑channel confirmation, and to train staff to treat unsolicited voice requests with skepticism. Consumers should limit publicly available samples of their voice, scrutinize unexpected calls that request money or credentials, and use two‑factor authentication where possible.

Regulators and tech firms are also urged to invest in detection tools, watermarking, and policy measures to curb abuse while preserving legitimate uses of generative audio technology.
